One-Shot Free-View Neural Talking-Head Synthesis for Video Conferencing

Ting-Chun Wang Arun Mallya Ming-Yu Liu

NVIDIA Corporation

CVPR 2021 (Oral)

[Paper] [Dataset] [Video] [Talk]

Abstract

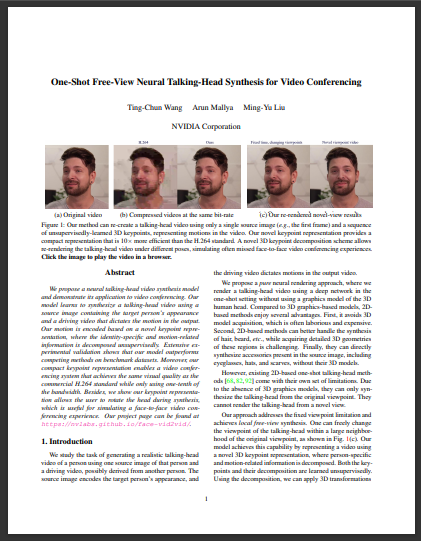

We propose a neural talking-head video synthesis model and demonstrate its application to video conferencing. Our model learns to synthesize a talking-head video using a source image containing the target person's appearance and a driving video that dictates the motion in the output. Our motion is encoded based on a novel keypoint representation, where the identity-specific and motion-related information is decomposed unsupervisedly. Extensive experimental validation shows that our model outperforms competing methods on benchmark datasets. Moreover, our compact keypoint representation enables a video conferencing system that achieves the same visual quality as the commercial H.264 standard while only using one-tenth of the bandwidth. Besides, we show our keypoint representation allows the user to rotate the head during synthesis, which is useful for simulating a face-to-face video conferencing experience.

Paper

Citation

Ting-Chun Wang, Arun Mallya, Ming-Yu Liu. "One-Shot Free-View Neural Talking-Head Synthesis for Video Conferencing."

In CVPR, 2021.

Bibtex

Code

This work is based upon Imaginaire for non-commercial use.

For business inquiries, please visit our website and submit the form: NVIDIA Research Licensing.

Dataset

The dataset we collected to train the model can be downloaded here. Note that the number of videos differ from that in the paper because a different preprocessing script was used to split the videos.

Demo

Please visit this page for an online demo.Our Example Results

|

|

Video Reconstruction

|

|

|

Head Rotation

|

|

|

Face Frontalization

|

|

|

Motion Transfer

|

|

|

Citation

If you find this useful for your research, please use the following.

@inproceedings{wang2021facevid2vid,

title={One-Shot Free-View Neural Talking-Head Synthesis for Video Conferencing},

author={Ting-Chun Wang and Arun Mallya and Ming-Yu Liu},

booktitle={Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition},

year={2021}

}