We believe that, to produce realistic outputs that remain consistent over time and across viewpoint changes, the method must be aware of the 3D structure of the world.

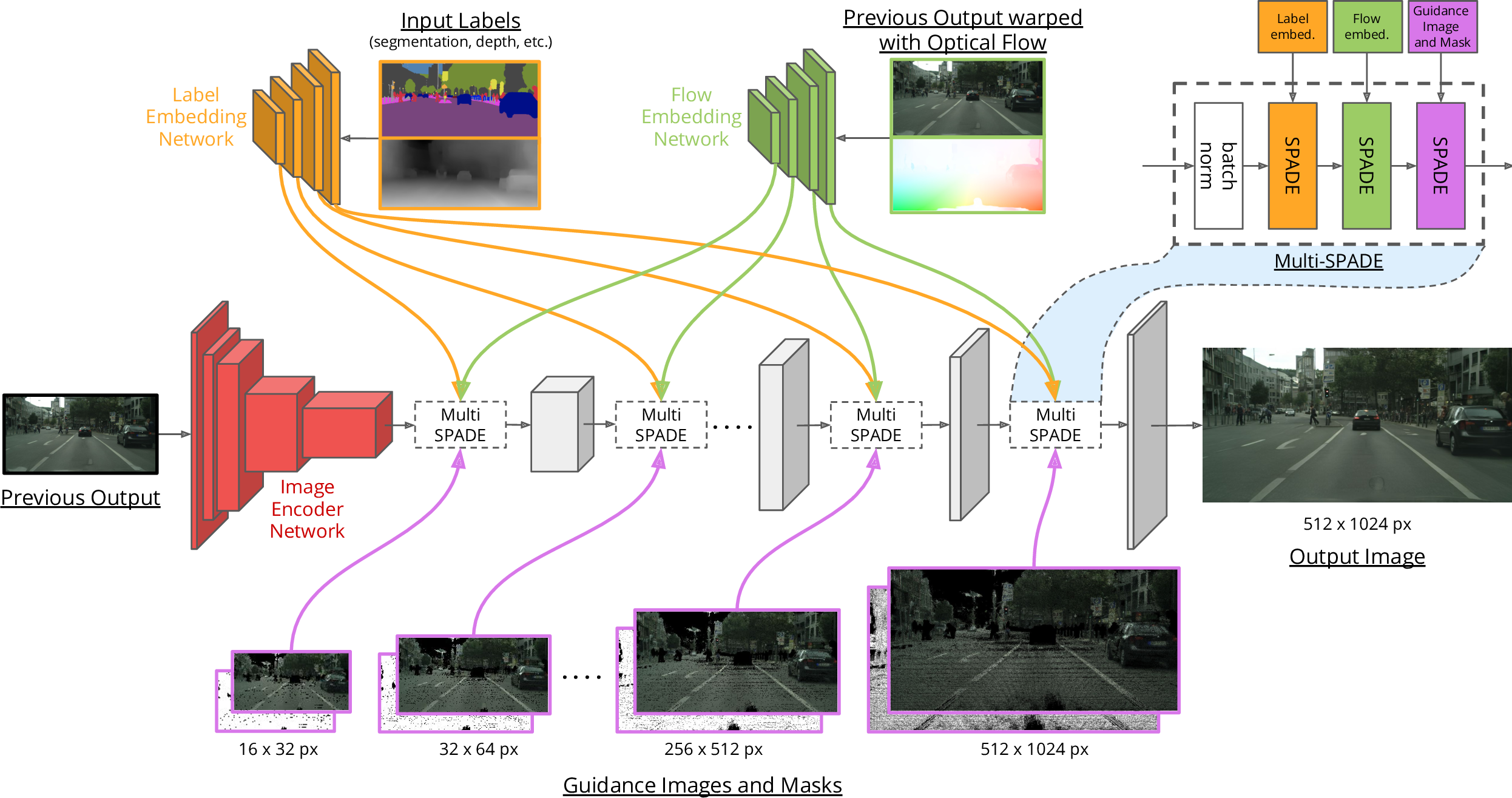

To achieve this, we introduce the concept of guidance images, which are physically grounded estimates of what the next output frame should look like, based on how the world has been generated so far. As their name suggests, these guidance images guide the generative model to produce colors and textures that respect previous outputs.

While prior works use optical flow to warp previous outputs, our guidance images differ from this approach in two respects.

First, instead of optical flow, the guidance image should be generated using the motion field, or scene flow, which describes the true motion of each 3D point in the world. Second, the guidance image should aggregate information from all past viewpoints (and thus all past frames), instead of only the immediately preceding frames as in vid2vid. This ensures that the generated frame is consistent with the entire history.

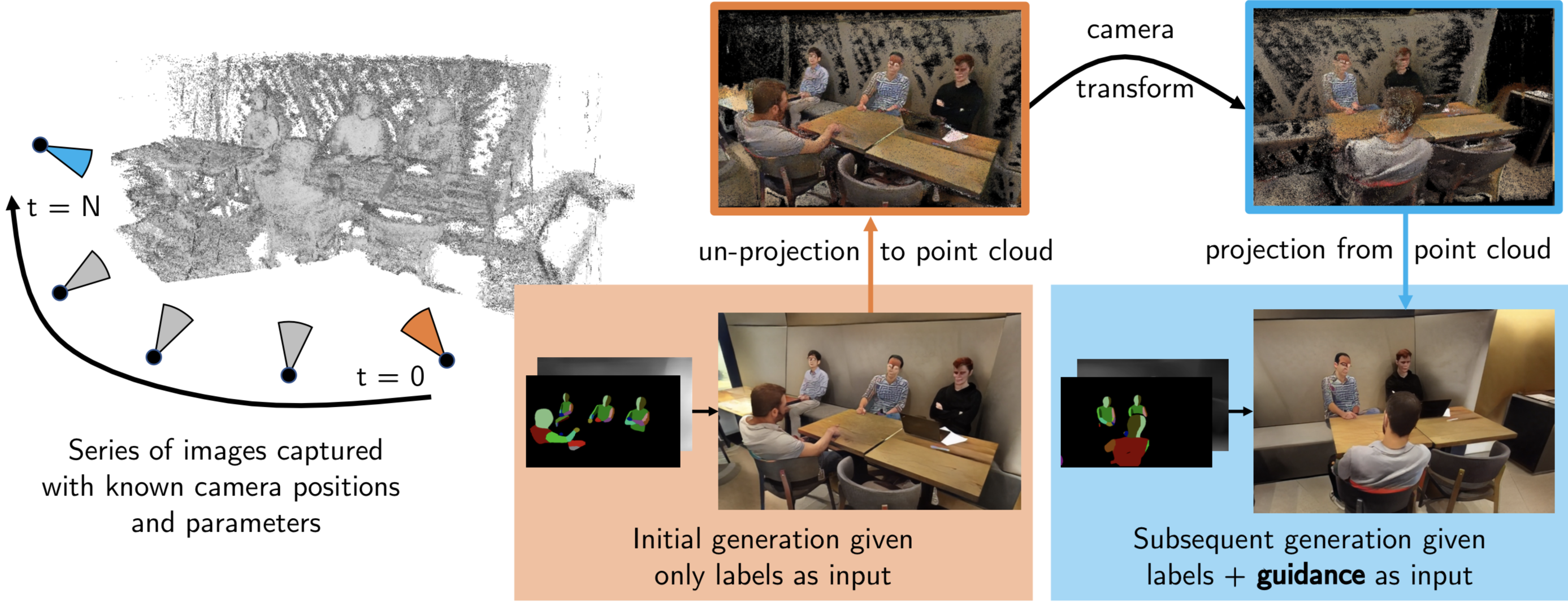

The figure below shows one method to generate guidance images by using point clouds and camera locations obtained by performing Structure from Motion (SfM) on an input video. In the case of a game rendering engine, the ground-truth scene flow can be obtained directly and used to generate guidance images.

Generating 3D-aware guidance images

One or more cameras with known parameters and positions travel through the scene over time \( t = 0,\ldots,N \). At \( t = 0 \), the scene is textureless, and an output image is generated for this viewpoint. The output image is then back-projected onto the scene, and a guidance image for a subsequent camera position is generated by projecting the partially textured point cloud. Using this guidance image, the generative method can produce an output that is consistent across views and smooth over time.
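The back-project-then-project loop described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the actual implementation: it assumes a static point cloud, a pinhole camera with `x_cam = R @ x_world + t`, nearest-pixel splatting, and a "first color assigned to a point is kept" rule. The function names `visible_points`, `back_project`, and `render_guidance` are hypothetical.

```python
import numpy as np


def visible_points(points, K, R, t, height, width):
    """Return (indices, pixels) of the points seen by a pinhole camera.

    Assumption: x_cam = R @ x_world + t. When several points land on the
    same pixel, only the nearest one is kept (a simple z-buffer).
    """
    cam = points @ R.T + t
    depth = cam[:, 2]
    in_front = depth > 1e-9
    safe_depth = np.where(in_front, depth, 1.0)  # placeholder, filtered below
    uv = (cam @ K.T)[:, :2] / safe_depth[:, None]
    uv = np.round(uv).astype(int)  # column 0 is x (u), column 1 is y (v)
    inside = (
        in_front
        & (uv[:, 0] >= 0) & (uv[:, 0] < width)
        & (uv[:, 1] >= 0) & (uv[:, 1] < height)
    )
    idx = np.flatnonzero(inside)
    idx = idx[np.argsort(depth[idx], kind="stable")]  # near to far
    flat = uv[idx, 1] * width + uv[idx, 0]
    _, first = np.unique(flat, return_index=True)  # first hit per pixel = nearest
    keep = idx[first]
    return keep, uv[keep]


def back_project(image, colors, textured, keep, uv):
    """Color the visible, still-untextured points from an output image (in place)."""
    fresh = ~textured[keep]
    colors[keep[fresh]] = image[uv[fresh, 1], uv[fresh, 0]]
    textured[keep[fresh]] = True


def render_guidance(colors, textured, keep, uv, height, width):
    """Splat textured visible points into a guidance image and a validity mask."""
    guidance = np.zeros((height, width, 3), dtype=colors.dtype)
    mask = np.zeros((height, width), dtype=bool)
    done = textured[keep]
    guidance[uv[done, 1], uv[done, 0]] = colors[keep[done]]
    mask[uv[done, 1], uv[done, 0]] = True  # False marks holes
    return guidance, mask
```

In this sketch, each time step would call `visible_points` for the current camera, `render_guidance` to build the input for the generator, and then `back_project` with the generated frame so that later viewpoints see the accumulated texture. Because points keep the first color they receive, the guidance image reflects the whole history rather than only the previous frame. Pixels where `mask` is `False` are holes that the generator has to fill.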

The guidance image can be noisy, misaligned, and have holes, and the generation method should be robust to such inputs.